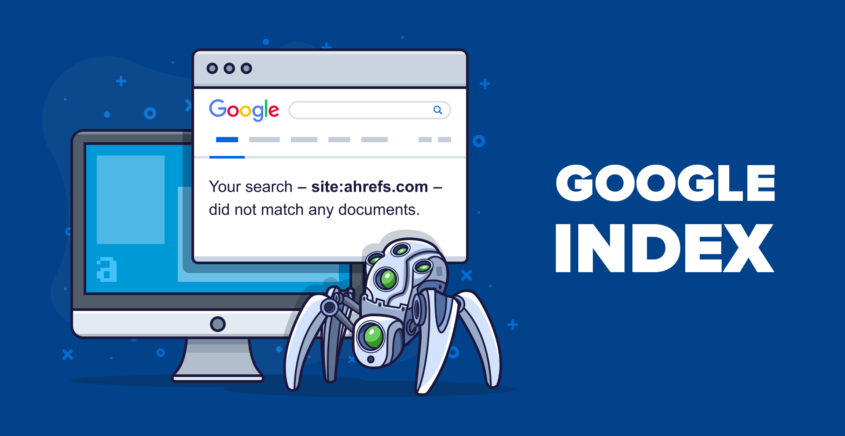

Fix indexing problems on your website and increase visibility on Google SERP

The visibility of your website on Google’s Search Engine Results Page (SERP) plays a crucial role in attracting organic traffic and driving online success. However, for your website to appear prominently on the SERP, it needs to be effectively indexed by search engines like Google. Indexing ensures that your website’s pages are properly analyzed, categorized, and included in search engine databases. In this article, we will explore the significance of indexing and visibility, as well as provide practical strategies to fix common indexing problems. By optimizing your website’s indexability, you can significantly enhance its visibility on Google SERP, leading to increased organic traffic and improved online presence.

1. Introduction: Understanding the importance of indexing and visibility on Google SERP

– The role of indexing in search engine optimization

When it comes to search engine optimization (SEO), indexing plays a crucial role. Search engines like Google first discover web pages by crawling them, then analyze the content and store it in their index. Only pages stored in the index can appear in search results. Without proper indexing, your website is like a hidden gem lost in the vast internet landscape.

– Why visibility on Google SERP matters for website success

Visibility on Google SERP (Search Engine Results Page) can make or break your website’s success. When users search for relevant keywords, having your website appear prominently in the search results increases your chances of attracting organic traffic. People rarely venture beyond the first page of search results, so if you want your website to be seen, you need to focus on improving your visibility on Google SERP.

2. Common indexing problems and their impact on website visibility

– Unindexed or partially indexed website pages

Having unindexed or partially indexed website pages is like having invisible content. If search engines can’t find and understand your pages, they won’t appear in search results. This means missed opportunities to attract visitors and potential customers.

– Crawling errors and HTTP status codes

Crawling errors and HTTP status codes can hinder search engines from properly indexing your website. When search engine bots encounter errors while crawling, they may not be able to access certain pages, resulting in incomplete indexing. A page that returns 404 (page not found) will not be indexed and can drop out of the index if it was there before, while repeated 5xx server errors can cause bots to crawl your site less often.
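As a rough illustration of how status codes relate to crawl problems, the sketch below sorts the entries of a crawl log into broad categories. The function name `crawl_outcome`, the category labels, and the sample log are assumptions made up for this example; a real audit would take its status codes from your server logs or a crawling tool.

```python
def crawl_outcome(status_code: int) -> str:
    """Map an HTTP status code to a broad crawl category (illustrative labels)."""
    if 200 <= status_code < 300:
        return "ok"
    if 300 <= status_code < 400:
        return "redirect"
    if status_code in (404, 410):
        return "missing"
    if 400 <= status_code < 500:
        return "client error"
    if 500 <= status_code < 600:
        return "server error"
    return "unknown"


# Hypothetical crawl log: URL path -> status code the server returned.
crawl_log = {"/": 200, "/old-page": 301, "/deleted": 404, "/checkout": 503}

# Keep only the entries that deserve a closer look.
problems = {
    path: crawl_outcome(code)
    for path, code in crawl_log.items()
    if crawl_outcome(code) != "ok"
}
print(problems)
```

Redirects are listed for review here, not because they are errors, but because each one should point at a page that still exists.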

– Server and hosting issues affecting indexing

Your website’s server and hosting play a role in indexing too. Slow server response times or downtime can discourage search engine bots from crawling your site effectively. If search engines can’t access your pages consistently, your visibility can suffer.

3. Optimizing website structure and navigation for better indexing

– Importance of a well-organized website structure

A well-organized website structure is not only beneficial for users but also for search engines. Clear categories, logical hierarchy, and clean URLs make it easier for search engine bots to crawl and understand your website. A tidy structure improves indexing efficiency, leading to better visibility.

– Designing user-friendly navigation menus

User-friendly navigation menus are essential for both user experience and indexing. When visitors can easily navigate through your website, search engines can also follow the links to discover and index all your important pages. Make sure your menus are intuitive, accessible, and mobile-friendly for optimal indexing.

– Implementing internal linking strategies

Internal linking is like a roadmap for search engine bots. By strategically linking your web pages together, you guide search engines to important pages that might otherwise be missed. Incorporating relevant internal links throughout your content enhances indexing and improves the visibility of interconnected pages.
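One way to picture this is to treat your site as a graph of pages and links and look for pages that cannot be reached from the homepage. The sketch below does that with a breadth-first search. The `site_links` data and the function name `reachable_pages` are hypothetical; in practice the link map would come from a crawl of your own site.

```python
from collections import deque


def reachable_pages(links: dict[str, list[str]], start: str) -> set[str]:
    """Return every page reachable from `start` by following internal links."""
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen


# Hypothetical link map: page -> pages it links to.
site_links = {
    "/": ["/blog", "/services"],
    "/blog": ["/blog/seo-basics"],
    "/services": ["/"],
    "/blog/seo-basics": [],
    "/landing/old-promo": ["/"],  # links out, but nothing links to it
}

orphans = sorted(set(site_links) - reachable_pages(site_links, "/"))
print(orphans)  # ['/landing/old-promo']
```

Pages that show up as orphans are good candidates for a new internal link from a related, already indexed page.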

4. Resolving technical issues affecting website indexing

– Fixing broken links and redirects

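Broken links and faulty redirects can confuse search engine bots and negatively impact indexing. Find links that point to missing pages and either update them or remove them. Redirects should also be properly implemented, ideally as a single permanent (301) redirect rather than a long chain, so that search engines can follow them and index the correct destination pages.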
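To make the redirect point concrete, here is a minimal sketch that follows a redirect map and reports the full chain, raising an error on loops or overly long chains. The `redirect_map` paths, the function name, and the `max_hops` limit of 5 are assumptions chosen for illustration, not values any search engine publishes.

```python
def resolve_redirects(redirects: dict[str, str], url: str, max_hops: int = 5) -> list[str]:
    """Follow `url` through the redirect map and return every URL visited."""
    chain = [url]
    while chain[-1] in redirects:
        next_url = redirects[chain[-1]]
        if next_url in chain:
            raise ValueError(f"redirect loop: {' -> '.join(chain + [next_url])}")
        chain.append(next_url)
        if len(chain) - 1 > max_hops:
            raise ValueError(f"more than {max_hops} redirects starting at {url}")
    return chain


# Hypothetical redirect rules: old path -> new path.
redirect_map = {
    "/old-pricing": "/pricing-2020",
    "/pricing-2020": "/pricing",
}

print(resolve_redirects(redirect_map, "/old-pricing"))
# ['/old-pricing', '/pricing-2020', '/pricing']
```

A chain like this one is usually better collapsed so that `/old-pricing` points straight at `/pricing`.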

– Addressing issues with JavaScript and CSS files

JavaScript and CSS files are essential for website functionality and design. Search engines such as Google render pages much like a browser does, so if their bots can’t fetch these files, they may not see your content and layout the way visitors do, which can hinder proper indexing. Make sure your JavaScript and CSS files are not blocked in robots.txt and that they load without errors.

– Handling Flash and iFrame content for better indexing

Flash and iFrame content can be problematic for search engine indexing. Flash is no longer supported by modern browsers, and Google stopped indexing Flash content in 2019, so any remaining Flash should be replaced with HTML. Content inside an iFrame belongs to the framed page and may not be credited to the page that embeds it. Consider alternative methods or provide textual alternatives to ensure better indexing and visibility.

Now armed with an understanding of common indexing problems and solutions, you can take steps to improve your website’s visibility on Google SERP. By addressing these issues and optimizing your website’s structure and technical aspects, you can increase your chances of attracting organic traffic and achieving online success. Remember, a visible website is a thriving website!

5. Creating and submitting a sitemap to improve search engine visibility

– Understanding the purpose and structure of a sitemap

Sitemaps are like a roadmap for search engines, showing them which pages on your website you want crawled. They help search engines discover your pages and can improve your visibility on the search engine results page (SERP). A sitemap typically lists the URL and last modified date of each page. The format also allows a priority value, but Google states that it ignores it.

– Generating a sitemap using XML sitemap generators

Building a sitemap by hand is tedious and error-prone, especially on a large website. That’s where XML sitemap generators come to the rescue. These tools crawl your website and automatically generate a sitemap for you, giving you a clean, well-formed file that you can submit to search engines. Review the result to confirm that all your important pages are included.
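For a sense of what such a tool produces, the following sketch builds a small sitemap with Python’s standard library. The `example.com` URLs, the dates, and the function name `build_sitemap` are placeholders invented for this example; the namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Build sitemap XML from (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. YYYY-MM-DD
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder pages; replace with the URLs of your own site.
pages = [
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/blog/seo-basics", "2024-02-01"),
]

sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

The resulting text would typically be saved as `sitemap.xml` at the root of the site.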

– Submitting the sitemap to Google Search Console

Once your sitemap is ready, upload it to your website and submit it to Google Search Console. This step tells Google which pages you want crawled and helps it discover them. Simply log in to your Search Console account, navigate to the Sitemaps section, and submit your sitemap URL. Google can then crawl and index your pages more efficiently, although submitting a sitemap does not guarantee that every page will be indexed.

6. Leveraging robots.txt to control indexing and crawling behavior

– Introduction to the robots.txt file

The robots.txt file is like a traffic cop for search engine bots. It sits at the root of your website and tells bots which pages they may crawl and which they should avoid. By using the robots.txt file strategically, you can have more control over how search engines interact with your website.

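– Excluding pages from crawling using robots.txt

If there are certain pages on your website that you don’t want search engines to crawl, you can specify them in the robots.txt file. By disallowing specific URLs, you can prevent search engines from wasting their time crawling irrelevant or duplicate content. Keep in mind that robots.txt controls crawling, not indexing: a disallowed URL can still appear in search results if other pages link to it. To keep a page out of the index, leave it crawlable and add a noindex robots meta tag instead.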

– Testing and validating robots.txt directives

To ensure that your robots.txt file is correctly set up, it’s crucial to test and validate its directives. The robots.txt report in Google Search Console, which replaced the older robots.txt Tester tool, shows whether Google could fetch and parse your file, and the URL Inspection tool shows whether a specific page is blocked. This way, you can verify that your crawling rules block the intended pages and allow access to the necessary ones.
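You can also check rules locally before publishing them. The sketch below parses a sample robots.txt with Python’s standard `urllib.robotparser` module and asks whether a few paths may be crawled. The rules, the `example.com` domain, and the helper name `is_crawlable` are assumptions for illustration only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for https://example.com/robots.txt
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(path: str, agent: str = "Googlebot") -> bool:
    """Return True if `agent` may crawl `path` under the rules above."""
    return parser.can_fetch(agent, "https://example.com" + path)


for path in ["/blog/post", "/admin/settings", "/search?q=seo"]:
    print(path, is_crawlable(path))
```

Treat this as a quick sanity check only. Python’s parser applies the first rule that matches, which may differ from how Google resolves overlapping Allow and Disallow rules, so confirm the final behavior in Search Console.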

7. Utilizing canonical tags to avoid duplicate content issues and enhance visibility

– Understanding duplicate content and its impact on SEO

Duplicate content can harm your website’s SEO efforts. When search engines encounter identical or very similar content on different URLs, they may struggle to determine which version is the most relevant. This can lead to a dilution of your website’s visibility on the SERP.

– Implementing canonical tags to consolidate content signals

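Canonical tags are HTML link elements, placed in the head of a page with rel="canonical", that help search engines identify the preferred version of a webpage when duplicate content exists. By adding a canonical tag with the preferred URL to all the duplicate pages, you consolidate the content signals and ask search engines to index and rank the chosen page. Search engines treat the tag as a strong hint rather than a command, so keep your internal links and sitemap consistent with it.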
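To spot-check a page, you could extract its canonical URL with a few lines of code. This is a minimal sketch using Python’s built-in `html.parser`; the sample markup, the class name `CanonicalFinder`, and the `example.com` URL are hypothetical.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Record the href of the first <link rel="canonical"> element seen."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rels = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rels and self.canonical is None:
            self.canonical = attr.get("href")


def find_canonical(html: str):
    """Return the canonical URL declared in `html`, or None if there is none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Hypothetical duplicate page (a filtered view) pointing at the preferred URL.
sample_page = """
<html><head>
<title>Blue widgets, sorted by price</title>
<link rel="canonical" href="https://example.com/widgets/blue">
</head><body>...</body></html>
"""

print(find_canonical(sample_page))  # https://example.com/widgets/blue
```

Running a check like this across all variants of a page helps confirm that they point to the same preferred URL.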

– Verifying canonical tag implementation using Google Search Console

After implementing canonical tags, it’s essential to verify that they are working correctly. By using Google Search Console, you can check if the canonical tags are set up properly and if Google is recognizing your preferred URLs. This step ensures that search engines are treating your duplicate content as intended, enhancing your website’s visibility.

8. Monitoring and maintaining website indexing performance for long-term success

– Regularly checking website indexing status

To ensure the long-term success of your website’s visibility, it’s important to regularly check its indexing status. By monitoring how search engines are crawling and indexing your pages, you can identify any issues early on and take appropriate action to resolve them promptly.

– Implementing ongoing SEO practices to improve visibility

Fixing indexing problems shouldn’t be a one-time task. Implementing ongoing SEO practices is crucial for maintaining and improving your website’s visibility. This includes optimizing your content for relevant keywords, improving website speed and user experience, earning quality backlinks, and staying updated with the latest SEO trends and algorithm changes. By continually investing in SEO efforts, you can secure long-term visibility on the Google SERP.

In conclusion, addressing indexing problems is crucial for improving the visibility of your website on Google SERP. By implementing the strategies outlined in this article, such as optimizing website structure, resolving technical issues, creating a sitemap, leveraging robots.txt, and utilizing canonical tags, you can enhance your website’s indexability and increase its chances of appearing higher in search results. Remember to regularly monitor and maintain your website’s indexing performance to ensure long-term success. With a well-indexed and visible website, you can attract more organic traffic, reach a wider audience, and achieve your online goals.

FAQ

1. Why is indexing important for my website’s visibility on Google SERP?

Indexing is the process by which search engines like Google analyze and include your website’s pages in their search results. When your website is properly indexed, it has a higher chance of appearing on the SERP, increasing its visibility to potential visitors. Without proper indexing, your website may not show up in search results, leading to lower visibility and reduced organic traffic.

2. What are some common indexing problems that can affect my website’s visibility?

Common indexing problems include unindexed or partially indexed pages, crawling errors and HTTP status codes, and server or hosting issues that prevent search engines from accessing your website. These issues can hinder your website’s visibility on Google SERP, as search engines may not be able to properly analyze and include your web pages in their search results.

3. How can I optimize my website’s structure and navigation for better indexing?

To optimize your website’s structure and navigation for better indexing, focus on creating a well-organized website hierarchy, designing user-friendly navigation menus, and implementing internal linking strategies. A clear and logical website structure helps search engines understand the relationship between different pages, while user-friendly navigation makes it easier for both search engines and visitors to explore your website.

4. How can I monitor and maintain my website’s indexing performance?

Monitoring and maintaining your website’s indexing performance is essential for long-term success. Regularly check your website’s indexing status using tools like Google Search Console, monitor crawl errors, and promptly fix any issues that may arise. Additionally, consistently implementing SEO practices, such as creating fresh and engaging content, optimizing meta tags, and building high-quality backlinks, can also contribute to maintaining a strong indexing performance.
